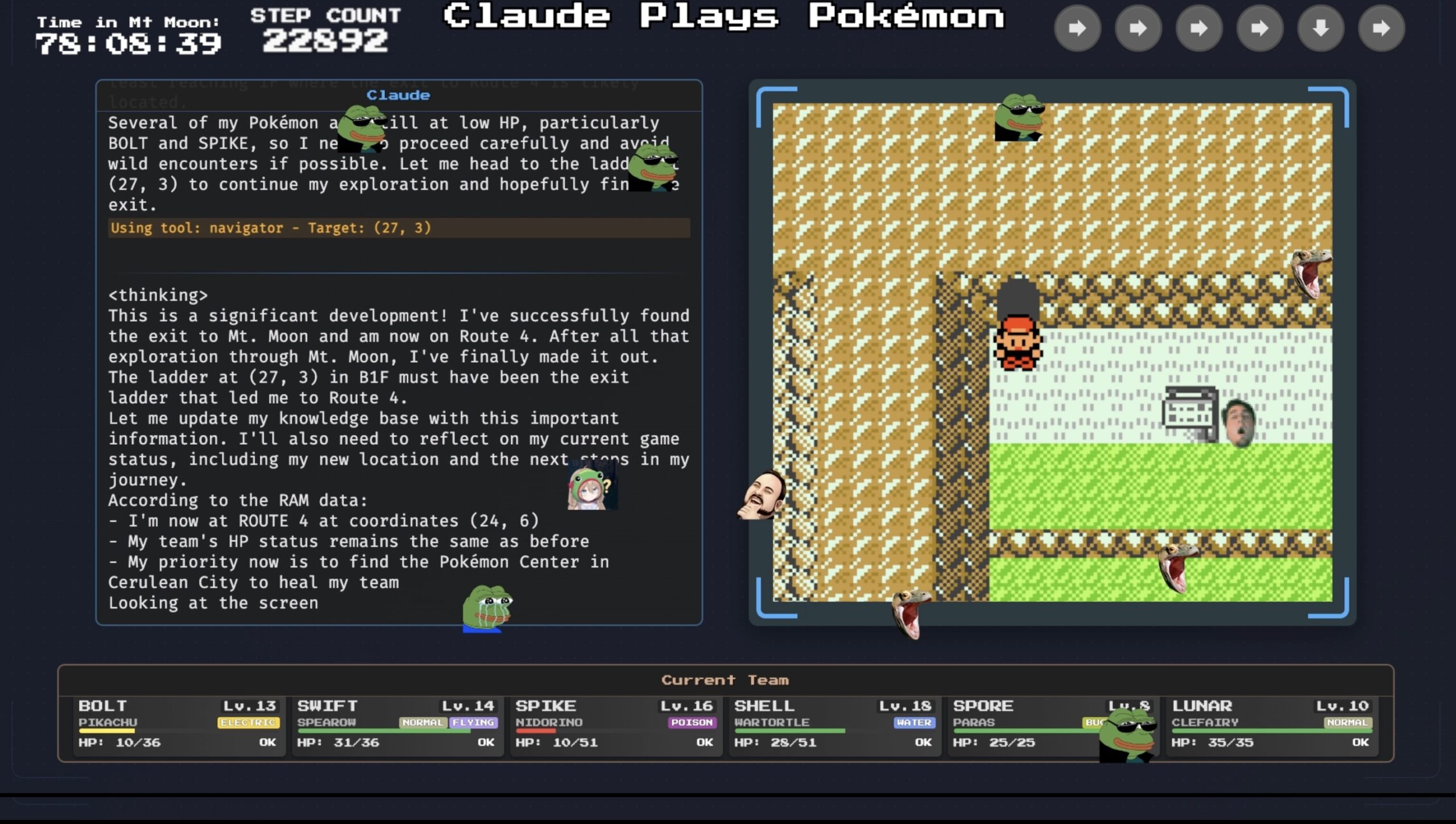

Where previous models wandered aimlessly or got stuck in loops, Claude 3.7 Sonnet plans ahead, remembers its objectives, and adapts when initial strategies fail.

Critical skills for battling pixelated gym leaders. And, we posit, in solving real-world problems too. pic.twitter.com/scvISp14XG

— Anthropic (@AnthropicAI) February 25, 2025

One of the biggest things preventing the current version of Claude from getting better, Hershey said, is that “when it derives that good strategy, I don't think it necessarily has the self-awareness to know that one strategy [it] came up with is better than another.” And that’s not a trivial problem to solve.

Still, Hershey said he sees “low-hanging fruit” for improving Claude’s Pokémon play by improving the model’s understanding of Game Boy screenshots. “I think there's a chance it could beat the game if it had a perfect sense of what's on the screen,” Hershey said, adding that such a model would probably perform “a little bit short of human.”

Expanding the context window for future Claude models will also probably allow those models to “reason over longer time frames and handle things more coherently over a long period of time,” Hershey said. Future models will improve by getting “a little bit better at remembering, keeping track of a coherent set of what it needs to try to make progress,” he added.

Whatever you think about impending improvements in AI models, though, Claude’s current performance at Pokémon doesn’t make it seem like it’s poised to usher in an explosion of human-level, completely generalizable artificial intelligence. And Hershey allows that watching Claude 3.7 Sonnet get stuck on Mt. Moon for 80 hours or so can make it “seem like a model that doesn't know what it's doing.”

But Hershey is still impressed at the way that Claude’s new reasoning model will occasionally show some glimmer of awareness and “kind of tell that it doesn't know what it's doing and know that it needs to be doing something different. And the difference between ‘can't do it at all’ and ‘can kind of do it’ is a pretty big one for these AI things for me,” he continued. “You know, when something can kind of do something it typically means we’re pretty close to getting it to be able to do something really, really well.”